How Nvidia’s inference bet at GTC poses a challenge and opportunity for China

Nvidia’s Groq 3 LPU chip widens the AI gap with China, but offers Chinese firms niche inference market opportunities, analysts say

Nvidia’s latest language processing chip, unveiled at the company’s annual artificial intelligence conference, has opened a new frontier in the AI inference arms race, as the booming market for AI agents like OpenClaw presents a complex new reality for China’s semiconductor industry, according to analysts.

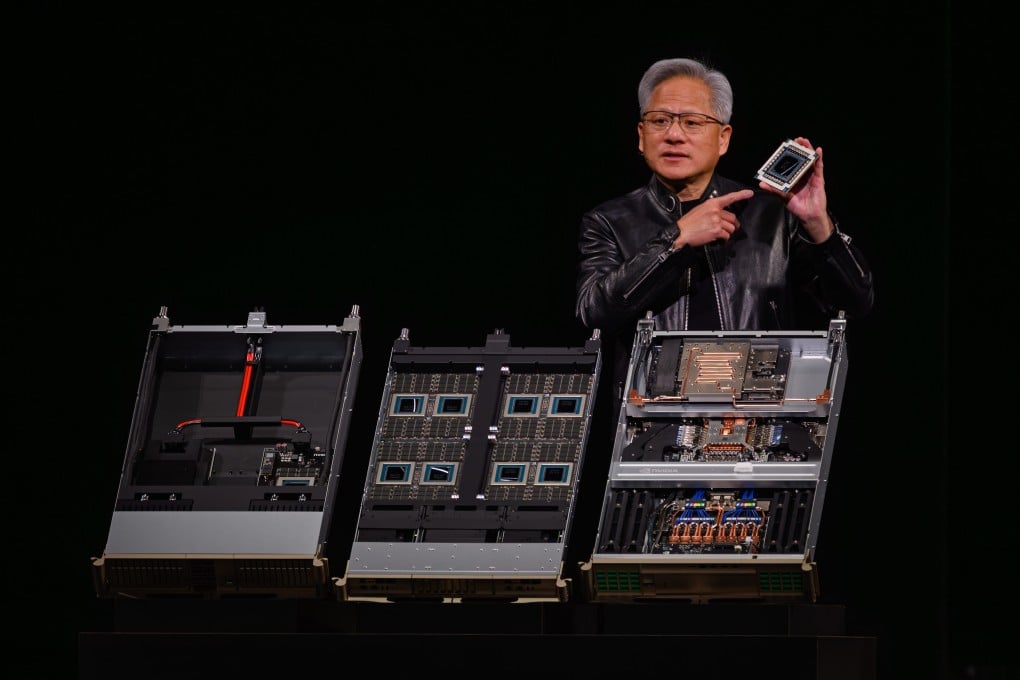

The Nvidia Groq 3 Language Processing Unit (LPU), introduced on Monday at GTC 2026 in San Jose, California, was described by the company as an accelerator with fast memory and low latency designed for agentic systems, which could perform real tasks and rely on inference workloads as “their fuel”.

By integrating the LPU into the Vera Rubin computing platform, which it also introduced at GTC, Nvidia was moving from selling individual chips to selling “AI factories” – racks where central processing units (CPUs), graphics processing units (GPUs) and LPUs function together to “open the next frontier of agentic AI”, the company said in another statement.

The gap between Nvidia and its Chinese rivals “is indeed widening, which has evolved from individual chip performance to a system-level dominance”, said Arisa Liu, chief director and research fellow at Taiwan Industry Economics Services, a unit of the Taiwan Institute of Economic Research.

“It appears that Chinese domestic chips now face a lag not merely in hardware specifications, but in the standardisation of the entire AI production pipeline,” Liu said.

However, the fragmentation of the AI inference market opened a window of opportunity for Chinese chipmakers, as “not all AI [workloads] will run in data centres”, Liu said.