OpenAI flagged Canadian school shooter months before massacre but did not alert police

Despite detecting a misuse of ChatGPT for ‘violent intent’, OpenAI felt the risk did not meet its bar for police referral

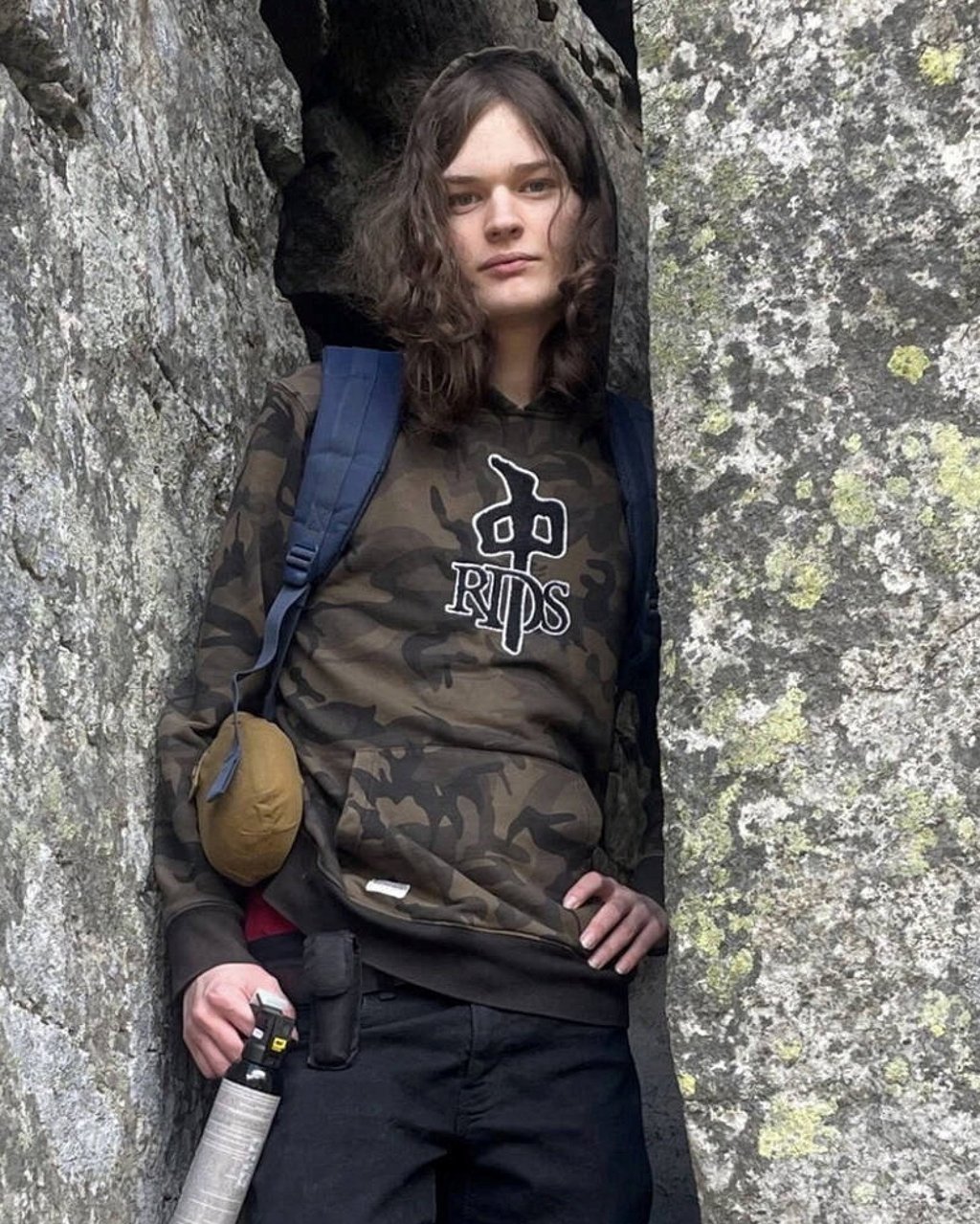

OpenAI said it identified the account of Jesse Van Rootselaar last June through its efforts to detect abuse of ChatGPT in “furtherance of violent activities”.

The San Francisco tech company said it considered whether to refer the account to the Royal Canadian Mounted Police but determined at the time that the account activity did not meet a threshold for referral to law enforcement. OpenAI banned the account in June 2025 for violating its usage policy.

OpenAI said its threshold for referring a user to law enforcement is whether a case involves an imminent and credible risk of serious physical harm to others. The company said it did not identify credible or imminent planning. The Wall Street Journal first reported OpenAI’s revelation.

OpenAI said that, after learning of the school shooting, employees reached out to the Canadian police with information on the individual and their use of ChatGPT.

“Our thoughts are with everyone affected by the Tumbler Ridge tragedy. We proactively reached out to the Royal Canadian Mounted Police with information on the individual and their use of ChatGPT, and we’ll continue to support their investigation,” OpenAI said in a statement.